Ryan Medlin

May 3, 2026

If you run a startup shipping AI-driven software, you've probably felt the tension between two forces: AI can now generate most of the code from a short task description, but human teams are still expected to ship safe, reliable, and legible systems. Agentic engineering is the operating model that tries to resolve that tension. It is not "let the AI write all the code," and it is not "review every line." It is human-led, AI-executed product development, with clear guardrails and risk-based oversight.

Agentic engineering is a way of building software in which humans define outcomes, constraints, and release decisions, while AI agents generate, test, refactor, and iterate on the implementation. In practice, that means three things: a task description driven by product or domain needs; an AI agent that implements the code, tests, and docs, often spanning UI, backend, and data models; and humans who focus on judgment, architecture, and final approval.
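To make that split of responsibilities concrete, here is a minimal Python sketch of a task description a human might hand to an agent. The `TaskSpec` class, its fields, and the `is_well_formed` check are hypothetical illustrations, not part of any particular agent framework. The point is that the outcome, acceptance criteria, constraints, and release decision are all human-owned inputs, while the implementation is left to the agent.

```python
from dataclasses import dataclass, field


@dataclass
class TaskSpec:
    """Hypothetical task description handed to an AI agent.

    Humans own every field; the agent owns the implementation.
    """
    outcome: str                     # what "done" means, in product terms
    acceptance_criteria: list[str]   # checks a human reviewer can verify
    constraints: list[str] = field(default_factory=list)  # guardrails the agent must respect
    requires_human_release: bool = True  # humans keep the release decision

    def is_well_formed(self) -> bool:
        # Ready for an agent only if the outcome is stated and there is
        # at least one verifiable acceptance criterion.
        return bool(self.outcome.strip()) and len(self.acceptance_criteria) > 0


task = TaskSpec(
    outcome="Users can export their invoices as CSV",
    acceptance_criteria=[
        "Export button appears on the invoices page",
        "CSV includes date, amount, and status columns",
    ],
    constraints=["Do not change the invoices table schema"],
)
print(task.is_well_formed())  # prints: True
```

Keeping `requires_human_release` as an explicit field makes the release decision visible in the task itself rather than implied by convention.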

For early-stage companies, the main benefit of agentic engineering is faster time-to-learning, not better code quality. AI can compress days of implementation into hours, letting teams run more experiments, collect more user feedback, and find product-market fit sooner. The bottleneck is no longer how fast you can write code; it is how fast you can define good outcomes, review the right parts, and recognize what is risky.

The most valuable roles in an agentic workflow are product owners, domain experts, and engineers. Product owners define what "done" means, domain experts define what key concepts actually mean, and engineers design small, testable tasks, set constraints, and review the risk surface rather than every line. Specialization does not disappear; it shifts toward outcomes and bounded contexts.

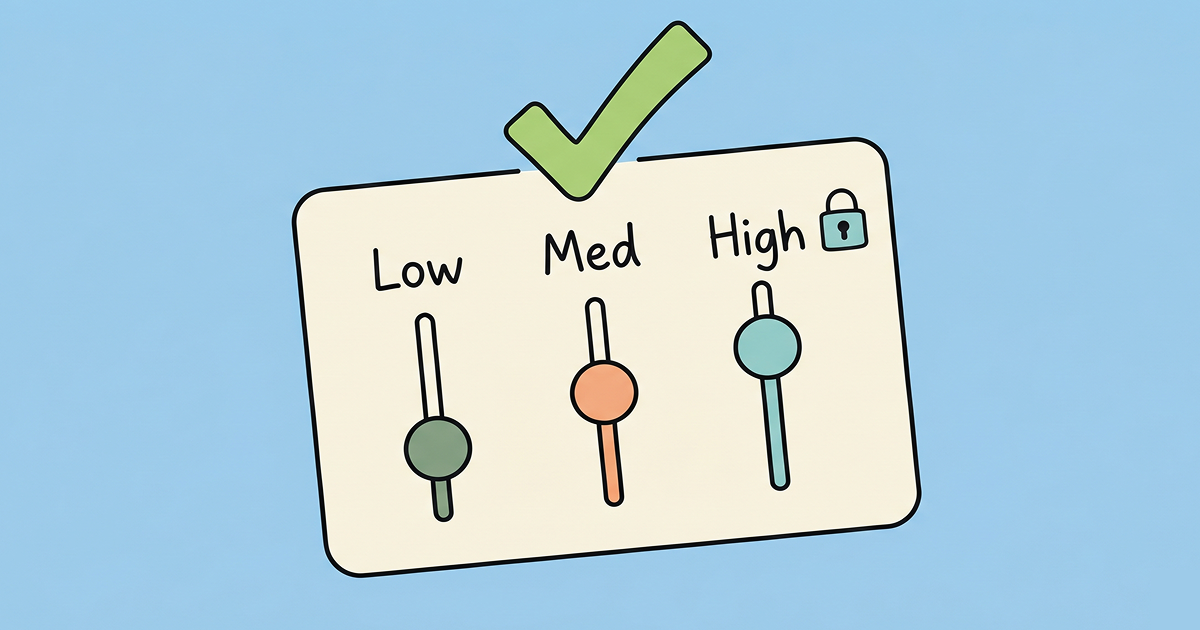

The best oversight model is risk-tiered, not ritual-based. Use the table below as a starting point for your team's policy.

| Risk Tier | Examples | Oversight |
|---|---|---|
| Low-risk | UI polish, copy changes, minor refactors, test improvements, small reversible schema changes | Linting, static analysis, CI, and AI review; light human review |
| Medium-risk | Public API changes, cross-service flows, legacy-system refactors, new features behind feature flags | Human or domain owner review after automation checks run |
| High-risk | Authentication, permissions, billing, hard-to-reverse data model changes, production infrastructure | Explicit human approval, staging, auditability, and a rollback plan |
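One way to make a policy like this enforceable is to classify each change by the files it touches. The sketch below assumes hypothetical directory names (`auth/`, `billing/`, `api/`, and so on) and a simple rule that a change is as risky as its riskiest file; both are illustrative assumptions, not a prescribed layout. The oversight lists simply restate the table.

```python
from enum import IntEnum
from fnmatch import fnmatch


class RiskTier(IntEnum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


# Hypothetical path patterns; map these to your own repository layout.
# Migrations are treated as high-risk because a path alone cannot show
# whether a schema change is small and reversible.
TIER_PATTERNS = {
    RiskTier.HIGH: ["auth/*", "permissions/*", "billing/*", "infra/*", "migrations/*"],
    RiskTier.MEDIUM: ["api/*", "services/*", "legacy/*"],
}

# Required oversight per tier, restating the policy table.
OVERSIGHT = {
    RiskTier.LOW: ["linting", "static analysis", "CI", "AI review", "light human review"],
    RiskTier.MEDIUM: ["automation checks", "human or domain owner review"],
    RiskTier.HIGH: ["explicit human approval", "staging", "auditability", "rollback plan"],
}


def classify_path(path: str) -> RiskTier:
    # Check stricter tiers first so a path matching several patterns
    # lands in the strictest one.
    for tier in (RiskTier.HIGH, RiskTier.MEDIUM):
        if any(fnmatch(path, pattern) for pattern in TIER_PATTERNS[tier]):
            return tier
    return RiskTier.LOW


def classify_change(paths: list[str]) -> RiskTier:
    # A change is as risky as the riskiest file it touches.
    return max((classify_path(p) for p in paths), default=RiskTier.LOW)


tier = classify_change(["ui/button.tsx", "api/invoices.py"])
print(tier.name, OVERSIGHT[tier])
# prints: MEDIUM ['automation checks', 'human or domain owner review']
```

Path matching is a coarse proxy. It cannot tell a small reversible schema change from a hard-to-reverse one, or see that a feature sits behind a flag, which is why the sketch treats every migration as high-risk and leaves a human free to downgrade the result.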

Human review should focus on functional correctness, security and permissions, architecture and boundaries, edge cases and error paths, and observability and documentation. Humans own semantic correctness and risk approval, while AI and automation handle most syntactic correctness and test coverage.

When working with legacy code, the best default is not to ask AI to invent a brand-new system from scratch. A better sequence is to have AI first analyze the existing code, extract the actual business rules, identify bugs and inconsistencies, and generate characterization tests that pin down current behavior. Only then should you refactor in place or translate the code in small steps. Rewrite from scratch only when the old subsystem is too contaminated to safely preserve or translate.
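To illustrate what a characterization test looks like, the sketch below pins the current behavior of a small legacy function before anyone touches it. `legacy_shipping_fee` and its quirks are invented for the example. Note that the tests record what the code does today, including a probable bug, rather than what it should do; that is what makes them a safe net for an AI-driven refactor or port.

```python
def legacy_shipping_fee(weight_kg: float, country: str) -> float:
    """Hypothetical legacy function standing in for real old code."""
    if country == "US":
        fee = 5.0 + weight_kg * 1.5
    else:
        fee = 12.0 + weight_kg * 2.0
    if weight_kg > 20:
        fee = fee * 0.9  # undocumented bulk discount found during analysis
    return round(fee, 2)


# Characterization tests: each one pins today's output, quirks included.
# They run under pytest, or as a plain script via the __main__ block below.

def test_domestic_small_parcel():
    assert legacy_shipping_fee(2, "US") == 8.0


def test_international_small_parcel():
    assert legacy_shipping_fee(2, "DE") == 16.0


def test_no_discount_at_exactly_20kg():
    assert legacy_shipping_fee(20, "US") == 35.0


def test_bulk_discount_above_20kg():
    assert legacy_shipping_fee(30, "US") == 45.0


def test_lowercase_country_is_treated_as_international():
    # Probably a bug, but it is current behavior: record it now, decide later.
    assert legacy_shipping_fee(2, "us") == 16.0


if __name__ == "__main__":
    for test in (
        test_domestic_small_parcel,
        test_international_small_parcel,
        test_no_discount_at_exactly_20kg,
        test_bulk_discount_above_20kg,
        test_lowercase_country_is_treated_as_international,
    ):
        test()
    print("all characterization tests pass")
```

Once these pass against the old code, the same tests can run against the refactored or translated version; any failure flags a behavior change that a human should confirm or reject.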

Agentic engineering is the most efficient way to ship AI-driven software in 2026, but only if the team keeps humans in the loop for security, data, payments, and irreversible changes. The best startup teams ship fast, learn faster, and use risk-based oversight instead of old-school process overload.